Predicting Influenza Virulence with Machine Learning

Proposal

Machine learning (ML) excels at creating models of the interactions between many weak correlations that may elude lower-dimensional statistical analysis. An example of a network of such interactions is the multifactorial sequence properties that determine the phenotype of a virus, such as influenza, in a given host. Although ML on viral sequence features has been used to predict more effective antiretroviral combinations for HIV 1, identify genetic markers for host selectivity within families of viruses 2, and refine genotyping strategies for Hepatitis C virus 3, the use of ML algorithms to predict the pathogenicity, infectivity, transmissibility, and vaccination response of an uncharacterized influenza strain from viral genomic sequence is still in its infancy 4,5,6. It is widely understood that these properties of influenza virus, as they manifest within a specific host, are complex polygenic traits. They are currently characterized as a collection of genetic mutations 7 isolated from wild strains, whose individual effects on virulence are further characterized in animal studies, e.g., the N66S mutation in the proapoptotic PB1-F2 viral protein that increased the virulence of the 1918 Spanish Flu virus 8. Multivariate analyses of the interactions between observed mutations are not commonly published, however. One such meta-analysis in 2009 used 69 genomic sequences of H5N1 avian influenza to create a Bayesian graphical model inferring their virulence in mammals and confirmed that virulence is directly influenced by mutations in at least four genes, with at least two mechanisms requiring particular mutation combinations 9.

The ability to predict changes in virulence properties in animal reservoirs of influenza, and the likely mutations that would cause transmission to humans, would have a profound effect on our ability to take preventative measures against outbreaks of hypervirulent influenza. Such outbreaks include the pandemics of 1918-1919 and 1957-1958, with tens of millions of casualties, and more recently the tens of thousands of deaths resulting from reemergence of an older strain in 1977 and triple reassortment in 2009 7. For example, the current vaccination strategy could be enhanced to generate immunity against not only previously known strains on the rise, but also predicted future pandemic strains. The novel triple-reassortment strain that produced the 2009 H1N1 pandemic was identified too late to be included in that season’s trivalent vaccine 10, requiring rapid development of a second vaccine at an additional cost of $2 billion 11. While some computational models of influenza virulence 12 and mutation 6 take a highly structural approach (e.g., based on antigen-receptor binding affinity), we propose that an ML algorithm modeling these phenomena should be constructed on phenotype-genotype correlation data for three reasons. Firstly, many mutations that are known to affect influenza virulence occur in the viral polymerase complex (PB1 and PB2) or the IFN antagonist (NS1) 7,9, and these are not captured by the cited models, which focus on the hemagglutinin protein (HA). Secondly, genotypic and phenotypic data on influenza isolates are being actively concentrated into several online public databases: GISAID, the Influenza Virus Resource, and the Influenza Research Database (IRD), from which data should be pipelined to continually inform any systematic model of influenza so that it may remain up to date.
Thirdly, depending on the selection of the ML technique, a human-interpretable model created by the training process could facilitate biological interpretation of the results, e.g., an ML analysis of host selectivity in Picornaviridae showed by mapping the most predictive AA k-mers back to annotated domains that replicase motifs in the polymerases were most discriminative 2.

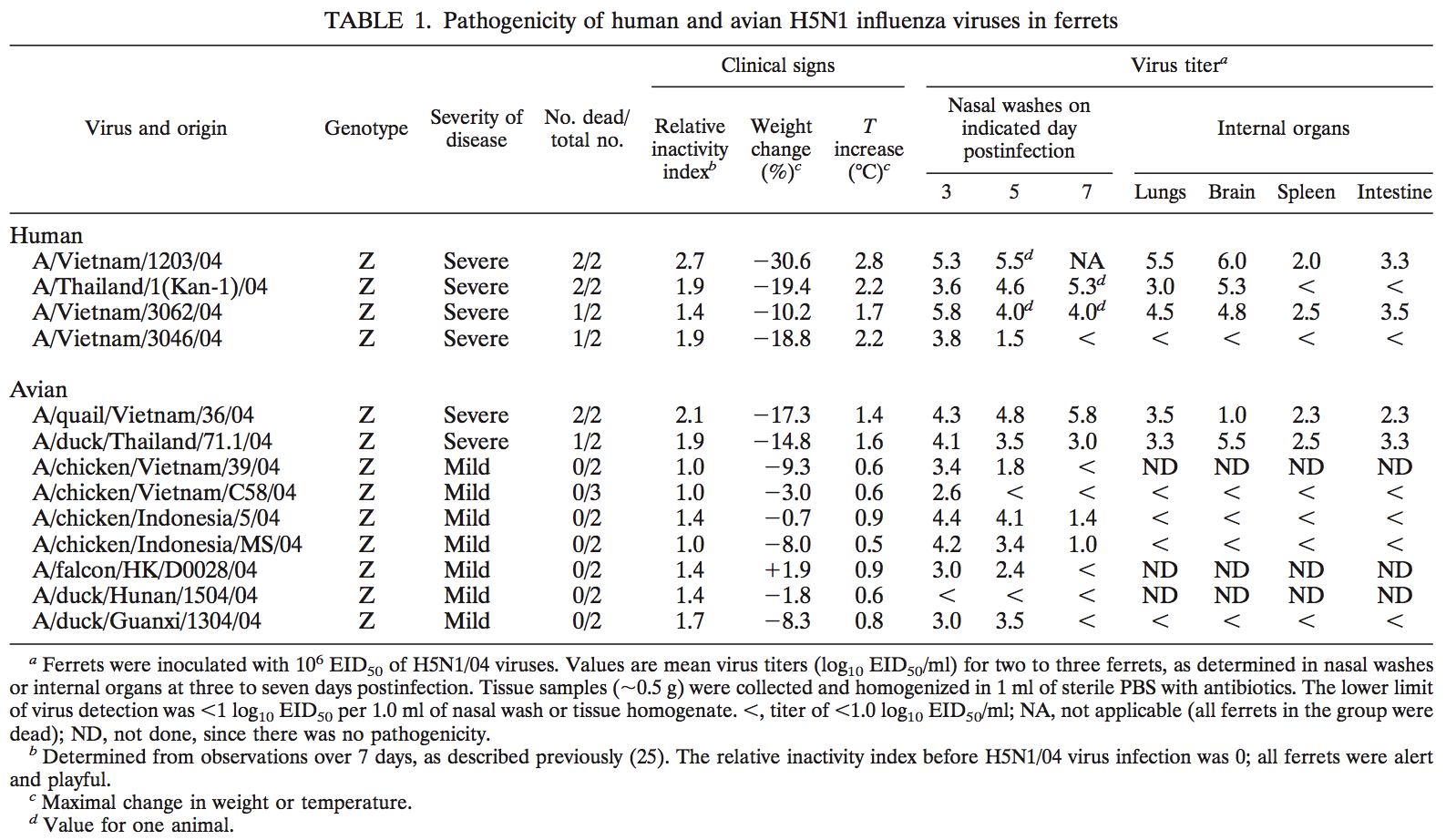

We propose that a decision-tree-based ML algorithm trained on phenotypic data from the IRD will be able to distinguish significant interactions between virulence factors and predict virulence caused by combinations of variants and segments that are currently uncharacterized. Firstly, the publications associated with each of the phenotype records will be filtered for articles that report pathogenicity data, and for each strain, we will extract data on lethality in the experimental animal cohort, severity of the disease, associated symptoms, and a time course of viral titers in various tissues. For example, Govorkova et al. report such data for four human isolates and nine avian isolates in ferrets 13, and other papers in the IRD report analogous results for other strains.

Table 1 from Govorkova et al., illustrating phenotypic data from animal experiments that will be used to train the ML algorithm.

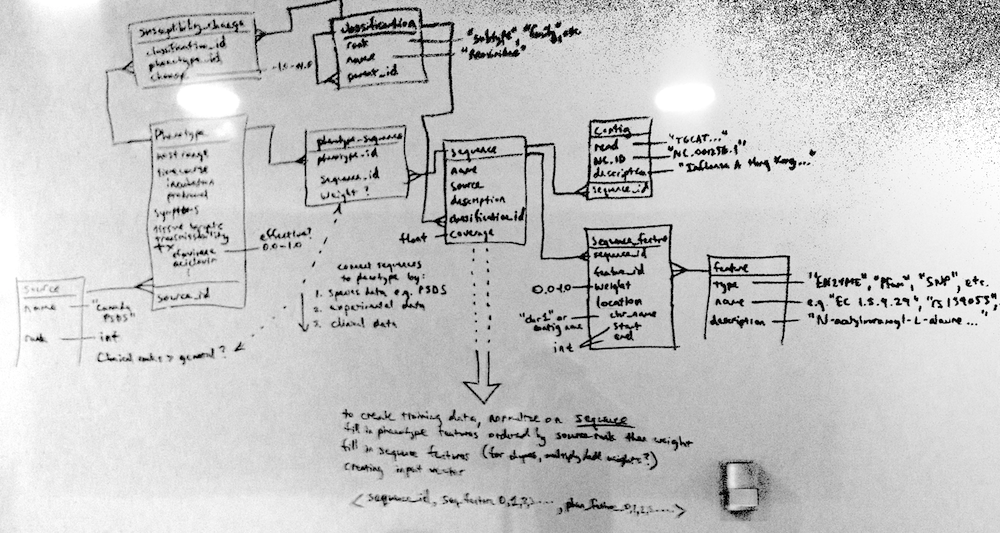

Having loaded this data into a relational database similar to the design seen in Figure 2, we will relate it to sequence data downloaded from IRD or GenBank. Sequence data must be processed to extract the genotypic features that will be used by the model. We may do this by simply using the sequencing-derived phenotype markers already annotated by IRD (typically AA substitutions), but we may also choose to use FLAN from the Influenza Virus Resource to generate a feature table, or align the sequences to a generalized reference assembly or hidden Markov model 14,15 and extract features according to our own criteria. These features could include raw k-mers of AAs or nucleotides, SNPs from the nucleic acid sequence, deleteriousness of these SNPs as predicted by PolyPhen 16 or similar, functional domains predicted within ORFs, codon usage bias within these domains, and more complex measures that attempt to capture the proximity of pairs of features.
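As a rough illustration of the simplest of these options, raw amino-acid k-mers, the sketch below turns a protein sequence into a fixed-length frequency vector. The function names, the choice of k = 2, and the toy peptide are all assumptions made for illustration; a real pipeline would more likely start from IRD annotations or FLAN output.

```python
from collections import Counter
from itertools import product

# The 20 standard amino acids; anything else (X, gaps, stops) is skipped.
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def kmer_counts(sequence, k=2):
    """Count overlapping k-mers, ignoring windows with non-standard residues."""
    seq = sequence.upper()
    counts = Counter()
    for i in range(len(seq) - k + 1):
        window = seq[i:i + k]
        if all(residue in AMINO_ACIDS for residue in window):
            counts[window] += 1
    return counts

def kmer_vector(sequence, k=2):
    """Return k-mer frequencies in a stable order so vectors are comparable."""
    counts = kmer_counts(sequence, k)
    total = sum(counts.values()) or 1  # avoid dividing by zero on empty input
    vocabulary = ["".join(p) for p in product(AMINO_ACIDS, repeat=k)]
    return [counts[kmer] / total for kmer in vocabulary]

# Toy peptide for illustration only; not a real influenza sequence.
toy_protein = "MSLLTEVETMSLL"
vector = kmer_vector(toy_protein, k=2)
```

Because the vocabulary is enumerated in a fixed order, every strain yields a vector of the same length (400 entries for k = 2), which is what a downstream tree-based learner would need.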

A relational data model for capturing pathogenic genotype-phenotype associations (apologies for cameraphone quality)

We will then extract (in a process analogous to denormalization of the sequence table in Figure 2) vectors of sequence features and phenotype features with which we can train a machine-learning algorithm. A decision-tree-based algorithm, such as probabilistic decision trees, random forests, or alternating decision trees, will be trained on these vectors to produce a model. The model can be applied by taking sequence data for an unknown strain, processing it via the same feature extraction pipeline used for the training set, and running the model on the resulting vector of features. We can validate this model internally via ten-fold cross-validation and externally via analysis of strains that are not yet in the IRD’s phenotype database but for which experimental or epidemiological data in the literature strongly suggest a correct phenotype.
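The internal validation step can be sketched in a few lines. The example below runs ten-fold cross-validation around a one-level decision tree (a "stump"), which is only a stand-in for the tree ensembles named above. The binary encoding (1 = mutation present, 1 = lethal phenotype) and the toy data are assumptions for illustration, not real IRD records.

```python
import random

def k_fold_indices(n_samples, k=10, seed=0):
    """Shuffle sample indices and deal them into k near-equal folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    return [indices[i::k] for i in range(k)]

def train_stump(X, y):
    """Pick the single binary feature (and polarity) that best matches y."""
    best_correct, best_rule = -1, (0, True)
    for j in range(len(X[0])):
        for same in (True, False):
            # same=True predicts the feature value; same=False its complement
            correct = sum((row[j] == label) == same for row, label in zip(X, y))
            if correct > best_correct:
                best_correct, best_rule = correct, (j, same)
    return best_rule

def predict_stump(rule, row):
    j, same = rule
    return row[j] if same else 1 - row[j]

def cross_validate(X, y, k=10, seed=0):
    """Mean held-out accuracy over k folds; the model never sees its test fold."""
    accuracies = []
    for held_out in k_fold_indices(len(X), k, seed):
        held = set(held_out)
        train = [i for i in range(len(X)) if i not in held]
        rule = train_stump([X[i] for i in train], [y[i] for i in train])
        hits = sum(predict_stump(rule, X[i]) == y[i] for i in held_out)
        accuracies.append(hits / len(held_out))
    return sum(accuracies) / k

# Toy data: 40 "strains" with 3 binary features; the label copies feature 1.
X = [[i % 2, (i // 2) % 2, (i // 4) % 2] for i in range(40)]
y = [row[1] for row in X]
mean_accuracy = cross_validate(X, y, k=10)
```

The toy label is a deterministic copy of one feature, so the stump recovers it perfectly; real phenotype data would be noisier, and the held-out accuracy is the quantity worth watching.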

Assuming our model holds up to internal and external validation, the decision tree or ensemble of decision trees produced by ML can be examined to determine interesting combinations of features that we predict will produce significant changes in virulence. The biological significance of these combinations can potentially be experimentally verified in animal models. Furthermore, by starting with the phenotypic prediction for a particular virus sequence and varying small combinations of features at a time, we can predict the changes that would cause the greatest change in virulence for that virus. This can be performed on the entire GenBank influenza library to identify which sequenced strains are predicted to already be the most virulent in humans, and which would increase most in virulence after a small number of genetic alterations.
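The "vary small combinations of features" step amounts to an exhaustive in silico scan. A minimal sketch, assuming binary features and some trained scoring function, is shown below; `toy_predict` is a hypothetical stand-in for the real model, hard-coded so that two features matter only in combination.

```python
from itertools import combinations

def rank_feature_changes(predict, features, max_changes=2):
    """Flip every combination of up to max_changes binary features and rank
    the combinations by how much they raise the predicted virulence score."""
    baseline = predict(features)
    results = []
    for size in range(1, max_changes + 1):
        for positions in combinations(range(len(features)), size):
            mutated = list(features)
            for p in positions:
                mutated[p] = 1 - mutated[p]  # toggle presence/absence
            results.append((predict(mutated) - baseline, positions))
    results.sort(key=lambda item: item[0], reverse=True)
    return results

def toy_predict(features):
    """Hypothetical model: feature 0 has a small additive effect, while
    features 1 and 2 raise the score only when both are present."""
    score = 0.1 * features[0]
    if features[1] and features[2]:
        score += 0.8
    return score

ranked = rank_feature_changes(toy_predict, [0, 0, 0, 0], max_changes=2)
top_delta, top_positions = ranked[0]
```

In this toy case the top-ranked change is the pair of features 1 and 2, which no single-feature scan would surface. The number of combinations grows quickly with `max_changes`, so a scan over the whole GenBank library would likely need to stay at two or three simultaneous changes.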

We can then make our model available through a web interface, allowing analysis of arbitrary influenza sequences using our pipeline, submission of new phenotypic data, and visualization of predictions for the GenBank influenza library. Sequence data is frequently the first informative data available for an emerging pathogen. Here, we hope to produce a model for influenza virulence based exclusively on sequence data that will produce for any given viral sequence, based on the most up-to-date experimental evidence curated in the IRD, 1) a likelihood of the unknown strain’s danger to humans and 2) the number and location of mutations that would most likely increase its virulence, allowing an assessment of whether it will evolve to be dangerous in the near future.

1. Lengauer T. Bioinformatical Assistance of Selecting Anti-HIV Therapies: Where Do We Stand? Intervirology. 2012;55(2):108–112. doi:10.1159/000332000.
2. Raj A, Dewar M, Palacios G, Rabadan R, Wiggins CH. Identifying Hosts of Families of Viruses: A Machine Learning Approach. PLoS ONE. 2011;6(12):e27631. doi:10.1371/journal.pone.0027631.
3. Hraber P, Kuiken C, Waugh M, Geer S, Bruno WJ, Leitner T. Classification of hepatitis C virus and human immunodeficiency virus-1 sequences with the branching index. Journal of General Virology. 2008;89(9):2098–2107. doi:10.1099/vir.0.83657-0.
4. Attaluri PK, Chen Z, Weerakoon AM, Lu G. Integrating Decision Tree and Hidden Markov Model (HMM) for Subtype Prediction of Human Influenza A Virus. In: Shi Y, Wang S, Peng Y, eds. Cutting-Edge Research Topics on Multiple Criteria Decision Making. Springer; 2009:52–58.
5. Trtica-Majnaric L, Zekic-Susac M, Sarlija N, Vitale B. Prediction of influenza vaccination outcome by neural networks and logistic regression. Journal of Biomedical Informatics. 2010;43(5):774–781. doi:10.1016/j.jbi.2010.04.011.
6. Xia Z, Das P, Huynh T, Royyuru AK, Zhou R. Modeling mutations of influenza virus with IBM Blue Gene. IBM J Res & Dev. 2011;55(5):7:1–7:11. doi:10.1147/JRD.2011.2163276.
7. Tscherne DM, García-Sastre A. Virulence determinants of pandemic influenza viruses. J Clin Invest. 2011;121(1):6–13. doi:10.1172/JCI44947.
8. Conenello GM, Zamarin D, Perrone LA, Tumpey T, Palese P. A Single Mutation in the PB1-F2 of H5N1 (HK/97) and 1918 Influenza A Viruses Contributes to Increased Virulence. PLoS Pathog. 2007;3(10):e141.
9. Lycett SJ, Ward MJ, Lewis FI, Poon AFY, Kosakovsky Pond SL, Brown AJL. Detection of Mammalian Virulence Determinants in Highly Pathogenic Avian Influenza H5N1 Viruses: Multivariate Analysis of Published Data. Journal of Virology. 2009;83(19):9901–9910. doi:10.1128/JVI.00608-09.
10. Lambert LC, Fauci AS. Influenza vaccines for the future. N Engl J Med. 2010;363(21):2036–2044. doi:10.1056/NEJMra1002842.
11. Nabel GJ, Fauci AS. Induction of unnatural immunity: prospects for a broadly protective universal influenza vaccine. Nat Med. 2010;16(12):1389–1391. doi:10.1038/nm1210-1389.
12. Goh G, Dunker AK, Uversky VN. Protein intrinsic disorder and influenza virulence: the 1918 H1N1 and H5N1 viruses. Virol J. 2009;6(1):69. doi:10.1186/1743-422X-6-69.
13. Govorkova EA, Rehg JE, Krauss S, et al. Lethality to Ferrets of H5N1 Influenza Viruses Isolated from Humans and Poultry in 2004. Journal of Virology. 2005;79(4):2191–2198. doi:10.1128/JVI.79.4.2191-2198.2005.
14. Kuiken C, Yoon H, Abfalterer W, Gaschen B, Lo C, Korber B. Viral Genome Analysis and Knowledge Management. In: Methods in Molecular Biology. Totowa, NJ: Humana Press; 2012:253–261.
15. Hughey R, Krogh A. Hidden Markov models for sequence analysis: extension and analysis of the basic method. Comput Appl Biosci. 1996;12(2):95–107.
16. Adzhubei IA, Schmidt S, Peshkin L, et al. A method and server for predicting damaging missense mutations. Nat Methods. 2010;7(4):248–249. doi:10.1038/nmeth0410-248.

The

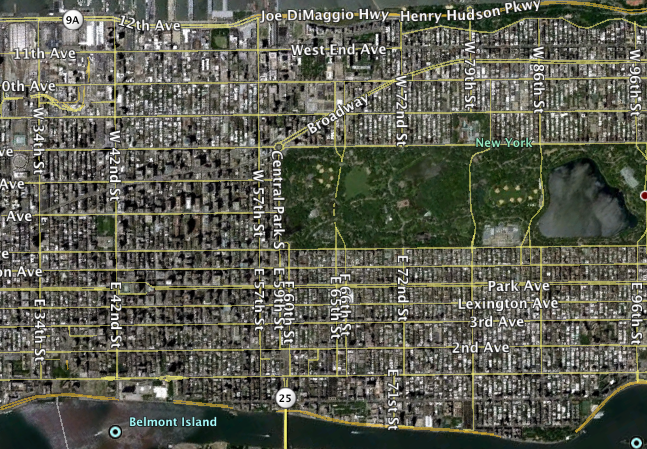

The  Imagery from Google Maps.

Imagery from Google Maps. Get on 3rd Avenue in the center lane and you will practically fly uptown. Taken from

Get on 3rd Avenue in the center lane and you will practically fly uptown. Taken from  Hey, it’s the CN tower over there! Should be an enjoyable five-block walk from here. Oh, wait. Taken from

Hey, it’s the CN tower over there! Should be an enjoyable five-block walk from here. Oh, wait. Taken from  Hey, it’s downtown Philadelphia over there! Should be an enjoyable five-block walk from here. Oh, wait. Taken from

Hey, it’s downtown Philadelphia over there! Should be an enjoyable five-block walk from here. Oh, wait. Taken from  This used to be a expressway in Boston. Moving it underground was the most expensive US highway project to date. Taken from

This used to be a expressway in Boston. Moving it underground was the most expensive US highway project to date. Taken from  Blocks on blocks on blocks. Imagery from Google Earth.

Blocks on blocks on blocks. Imagery from Google Earth. E 70th in NYC vs. S 12th in Philly.

E 70th in NYC vs. S 12th in Philly.  From left to right, the Bronx, Queens, Manhattan, and Brooklyn. Each tile is at the same scale. Imagery from Google Maps.

From left to right, the Bronx, Queens, Manhattan, and Brooklyn. Each tile is at the same scale. Imagery from Google Maps.

Discuss this post on HN.